I am UI-retarded. I don't have it in me to code/draw UI that looks decent. I have a good eye for what looks good and what doesn't, but SNKRX's UI looks like it does because I can't actually make anything better, it's the best I can do. SNKRX has evolved into its own visual genre, with plenty of games inspired by it building on the style (itself coming from a long lineage of such games). Orblike will continue that tradition of visuals-made-by-a-coder-who-can't-draw-for-shit, but I want to be able to do better.

Yesterday, ChatGPT Images 2.0 released and I saw a lot of interesting posts on Twitter about it. It seems like it's a really good image generation model. One post caught my attention:

Create a multi-page (multiple images) brand kit for a brand named NET, based on the uploaded moodboard. 6 slides, including logo, typography, color, art direction, website direction, and social media templates. Generate this as a single image.

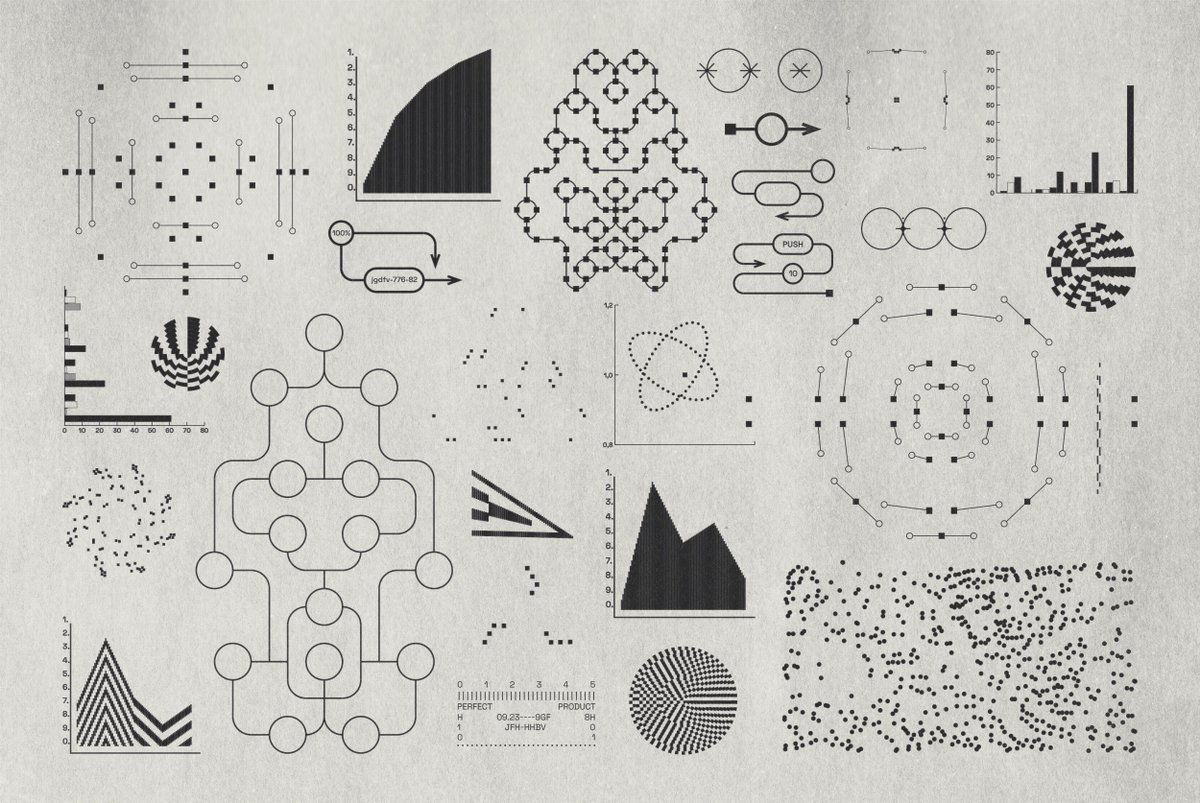

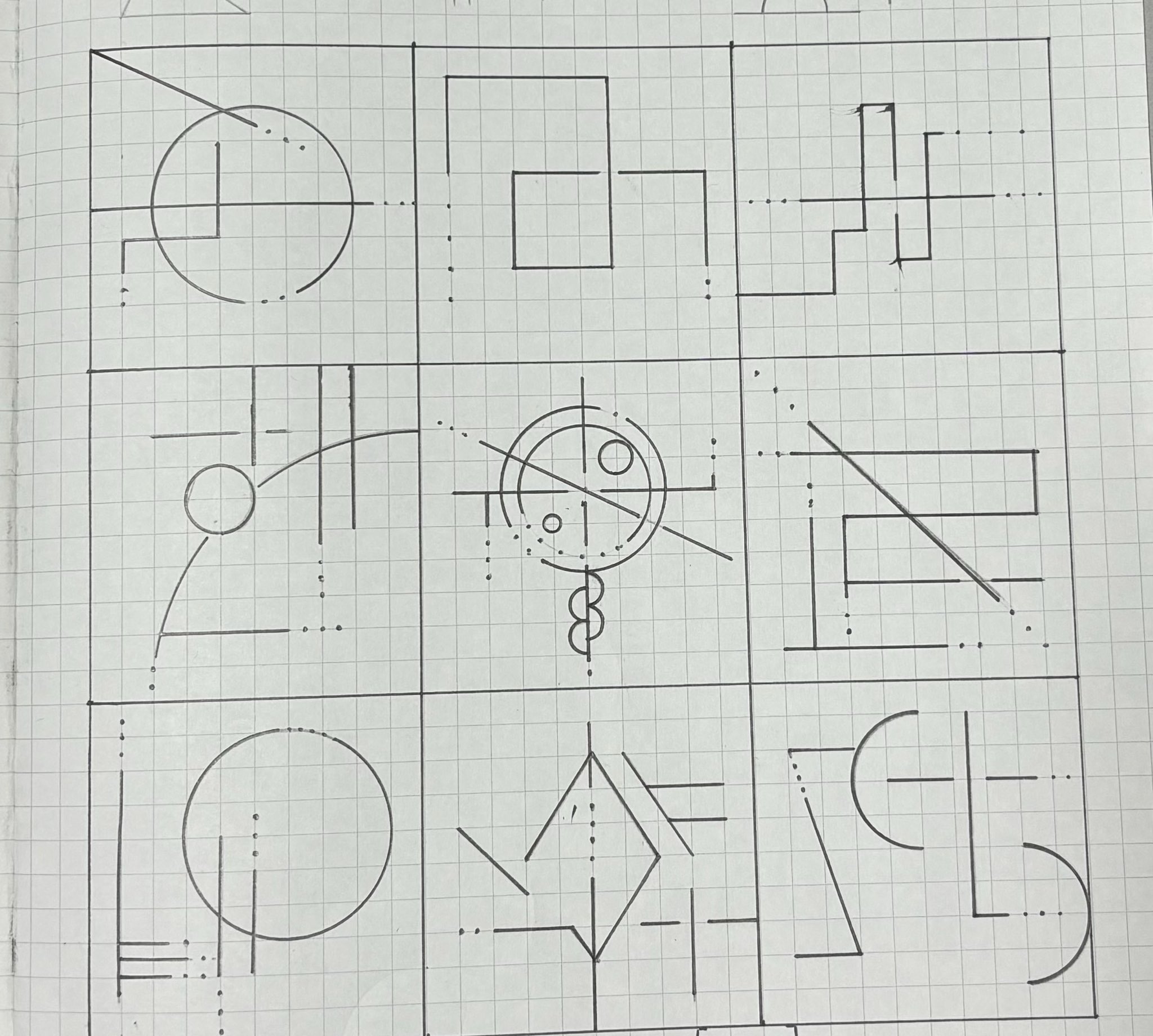

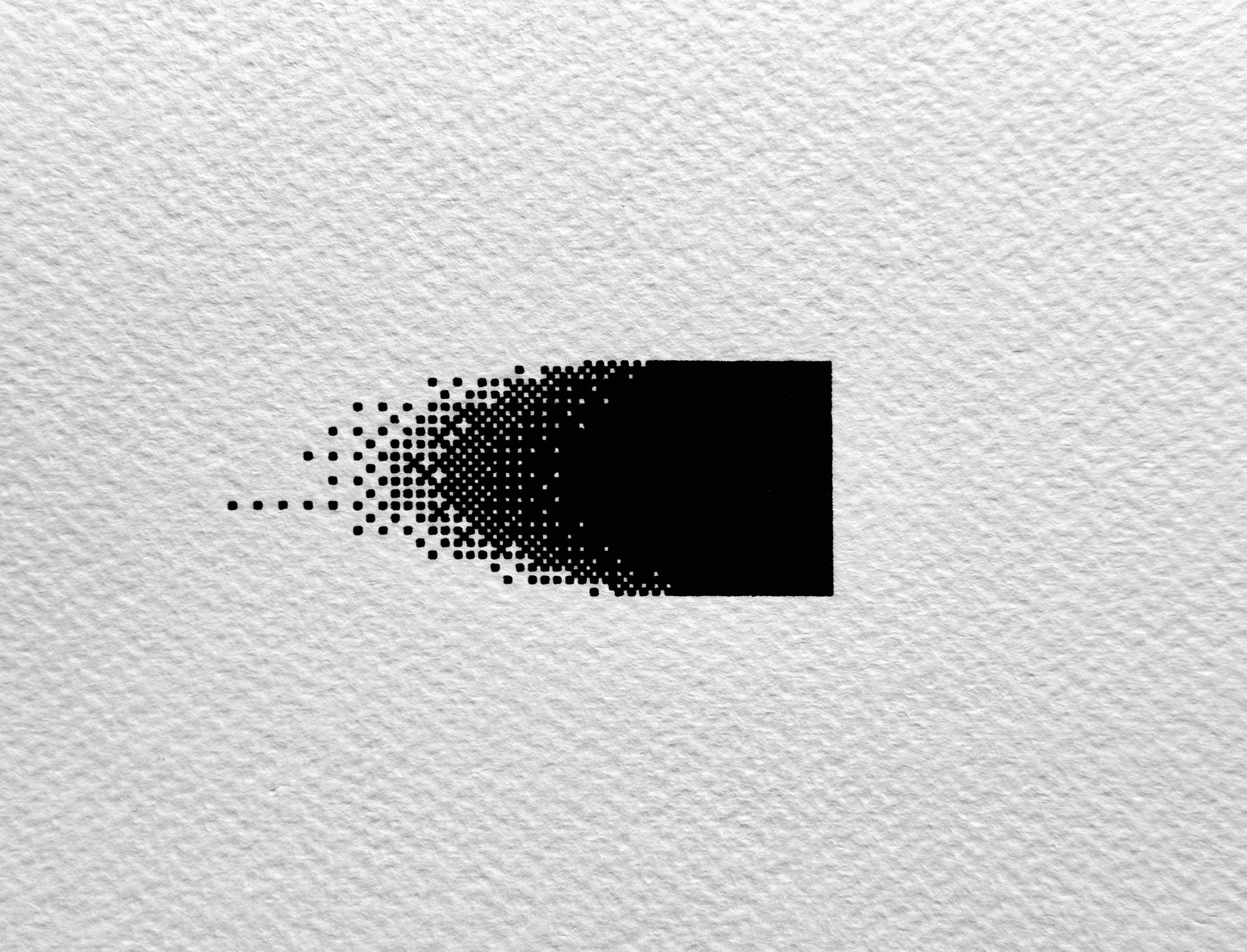

It was an interesting prompt, so I immediately tried it out myself with my own mood images. Going through my Eagle reference library, which is unfortunately 90% populated by images of cute anime boys, I eventually found 3 similar enough images for this purpose:

And then I sent my prompt to chat:

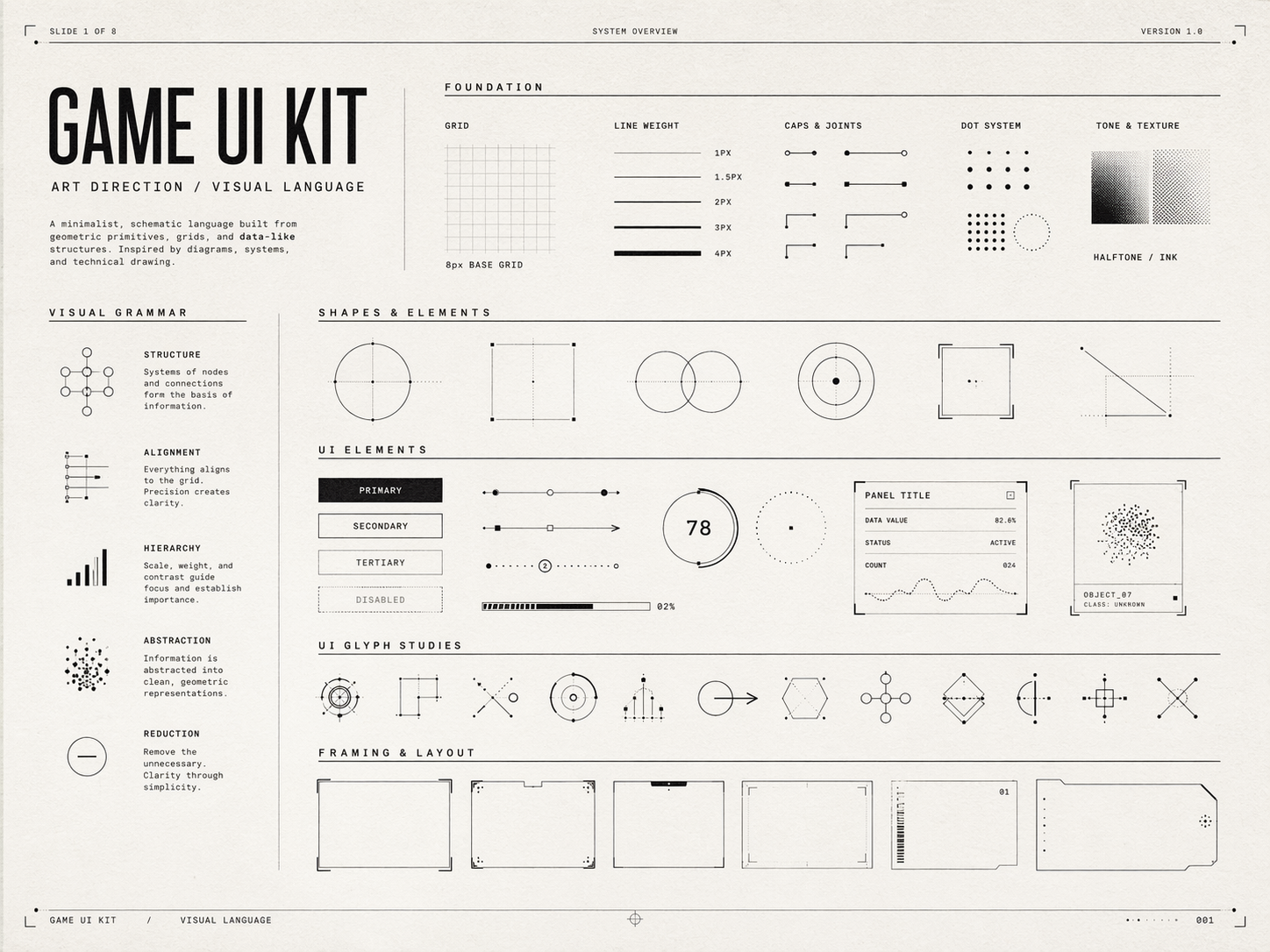

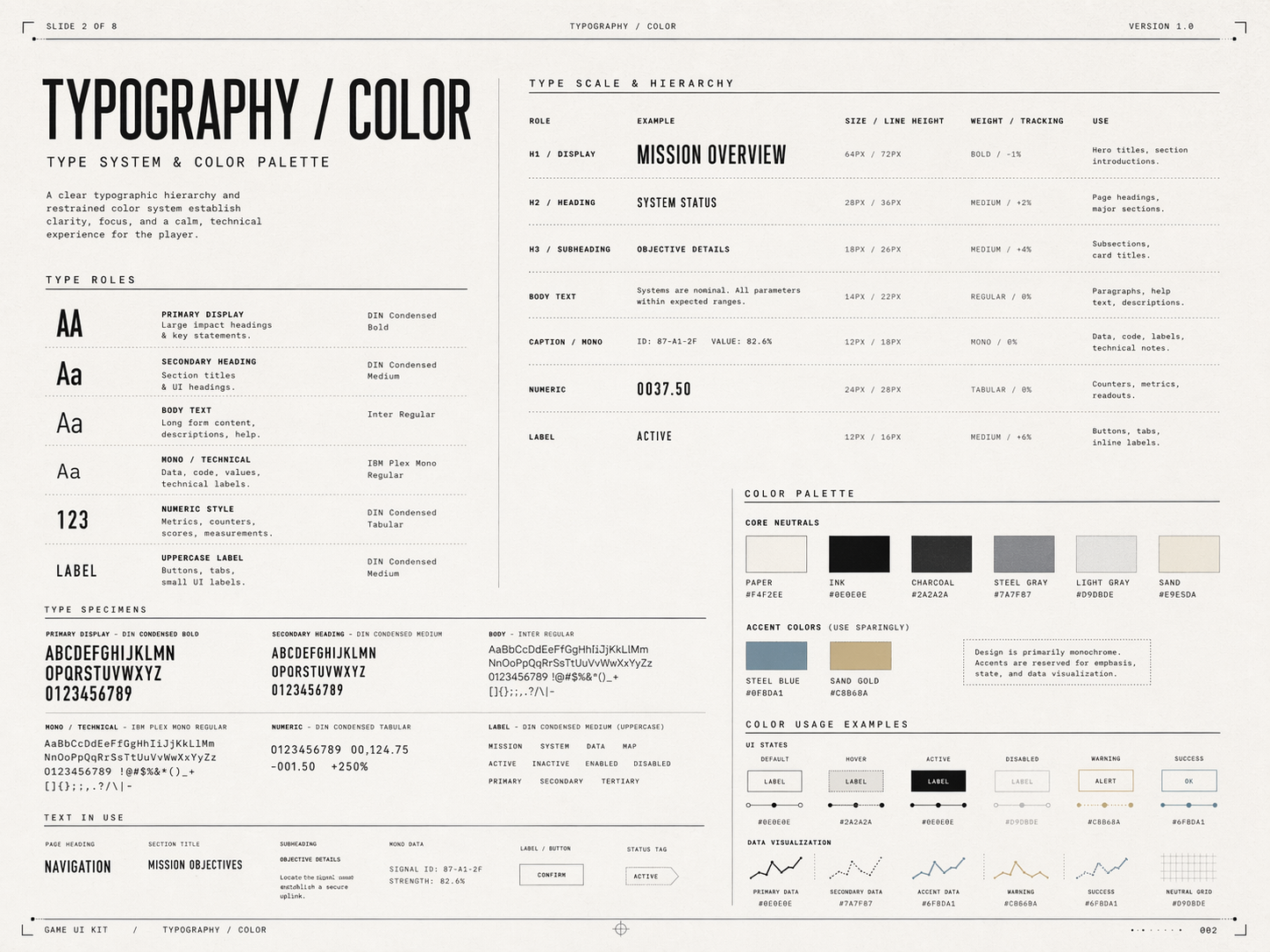

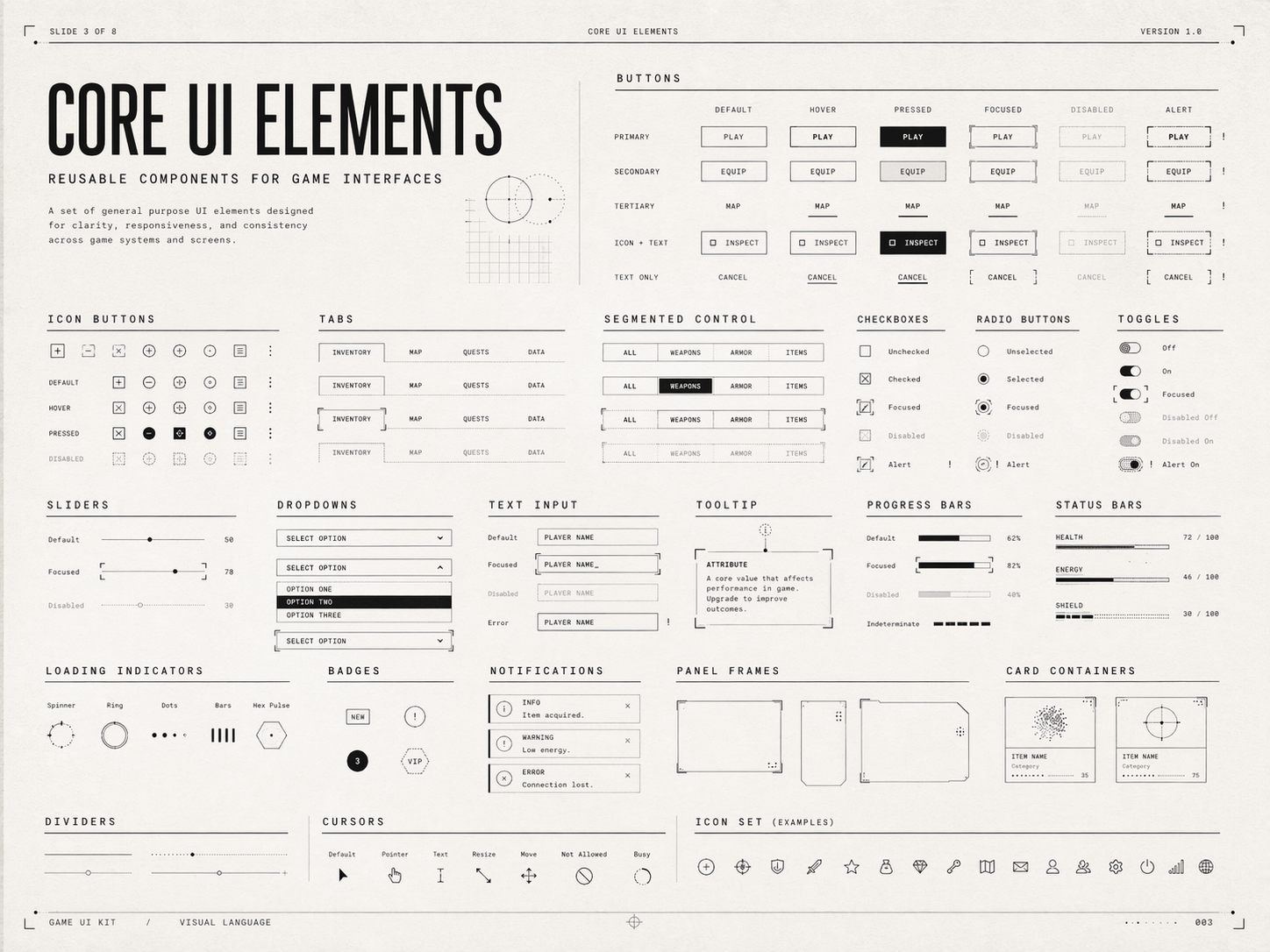

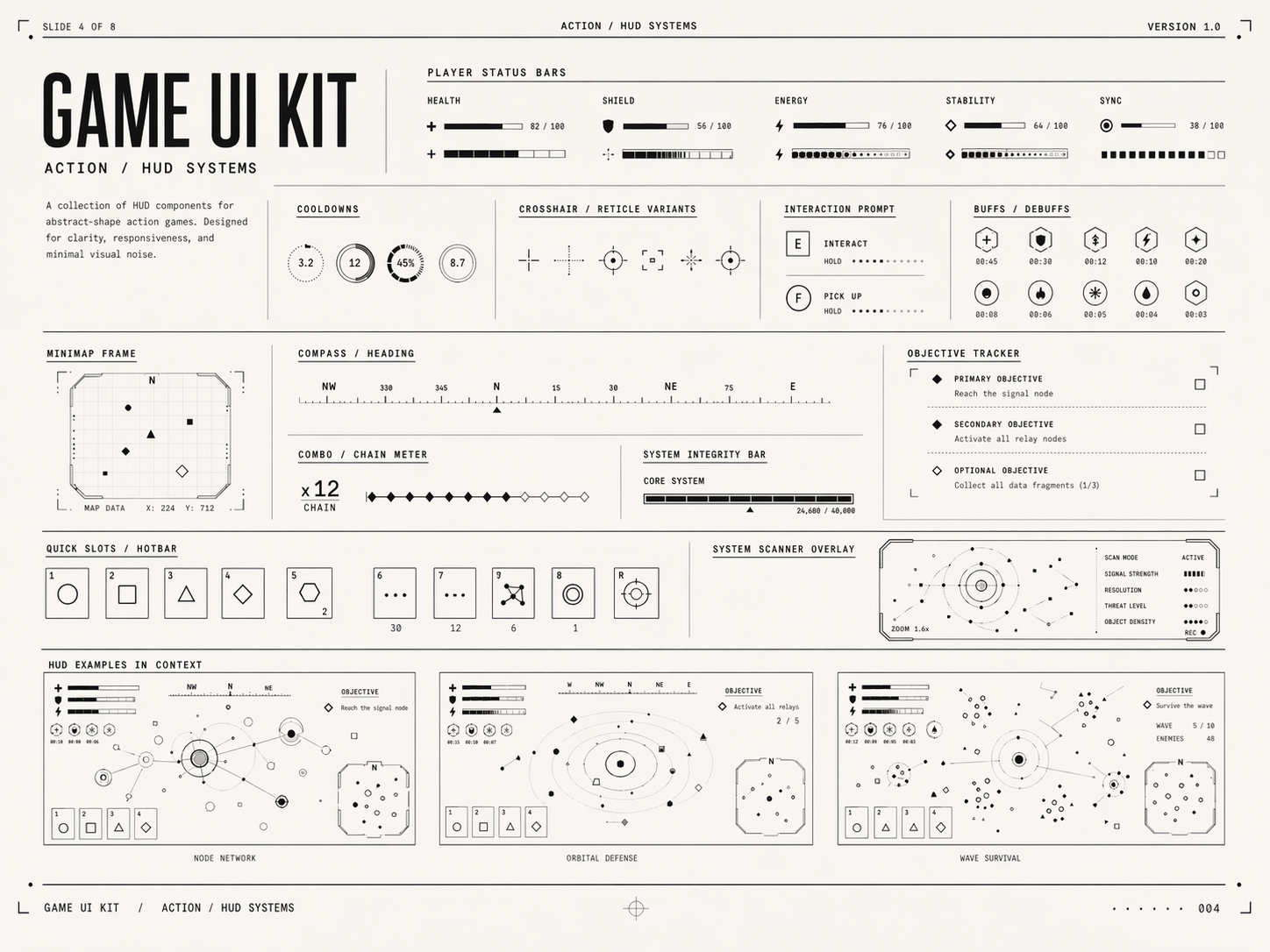

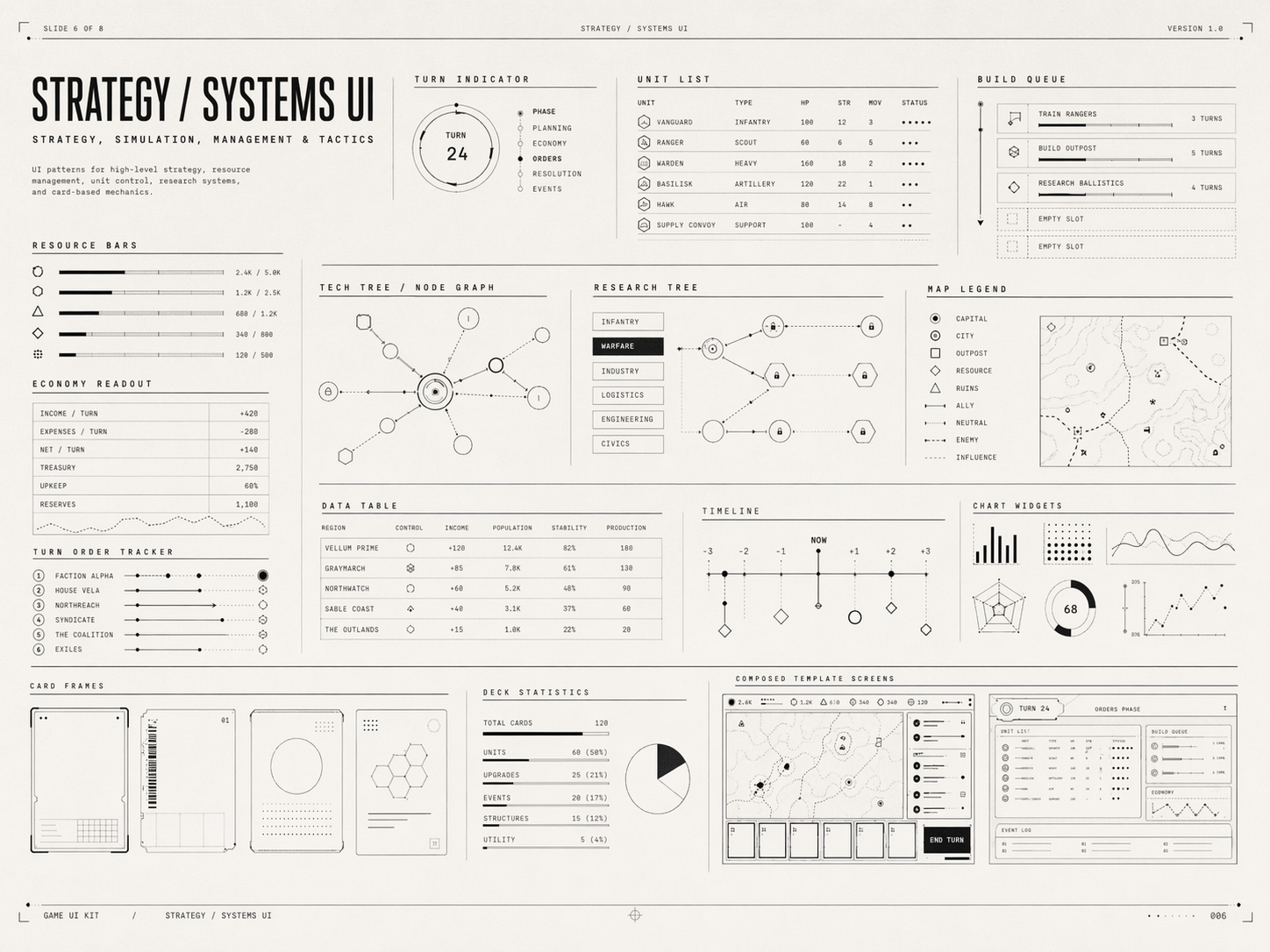

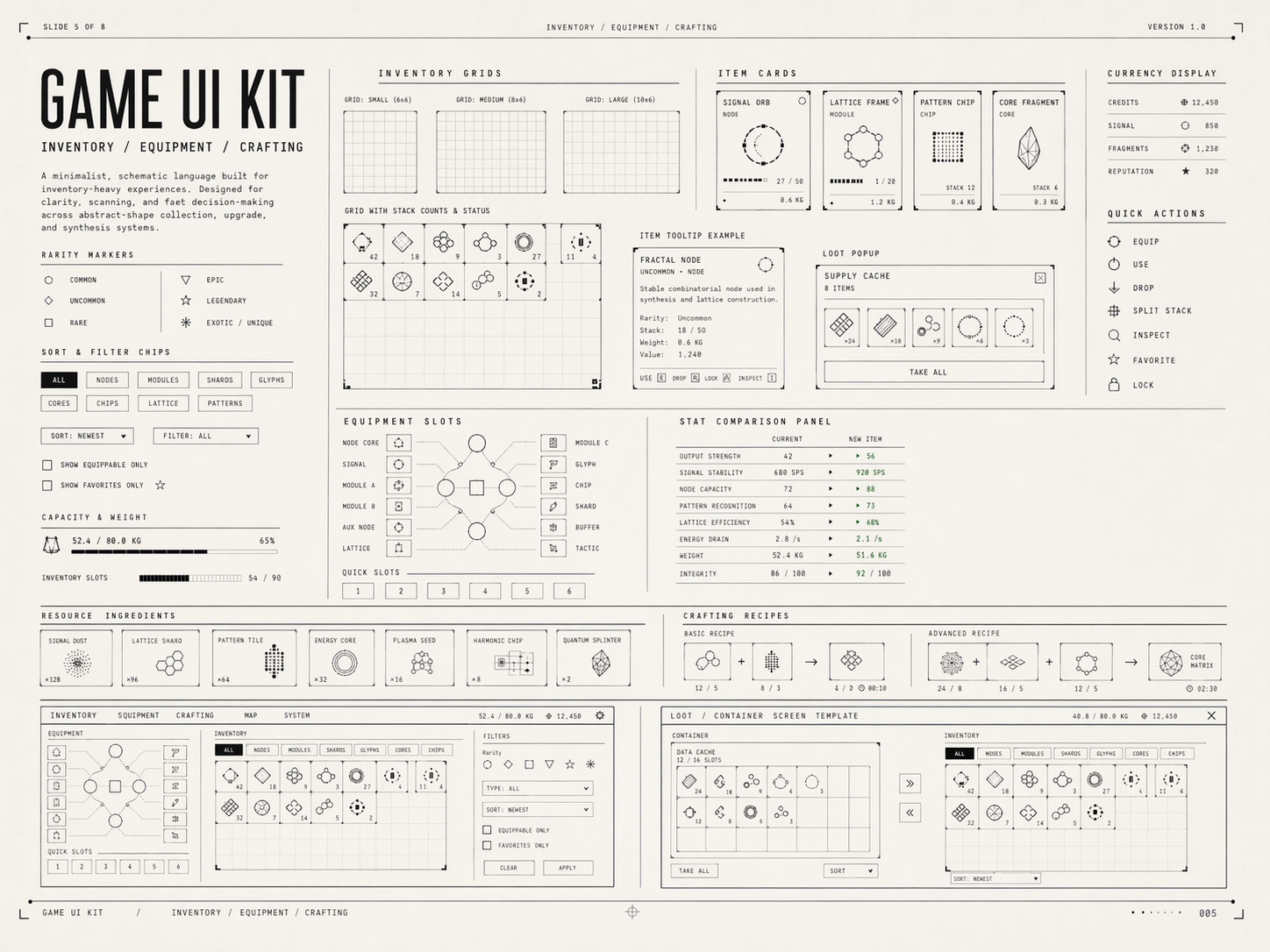

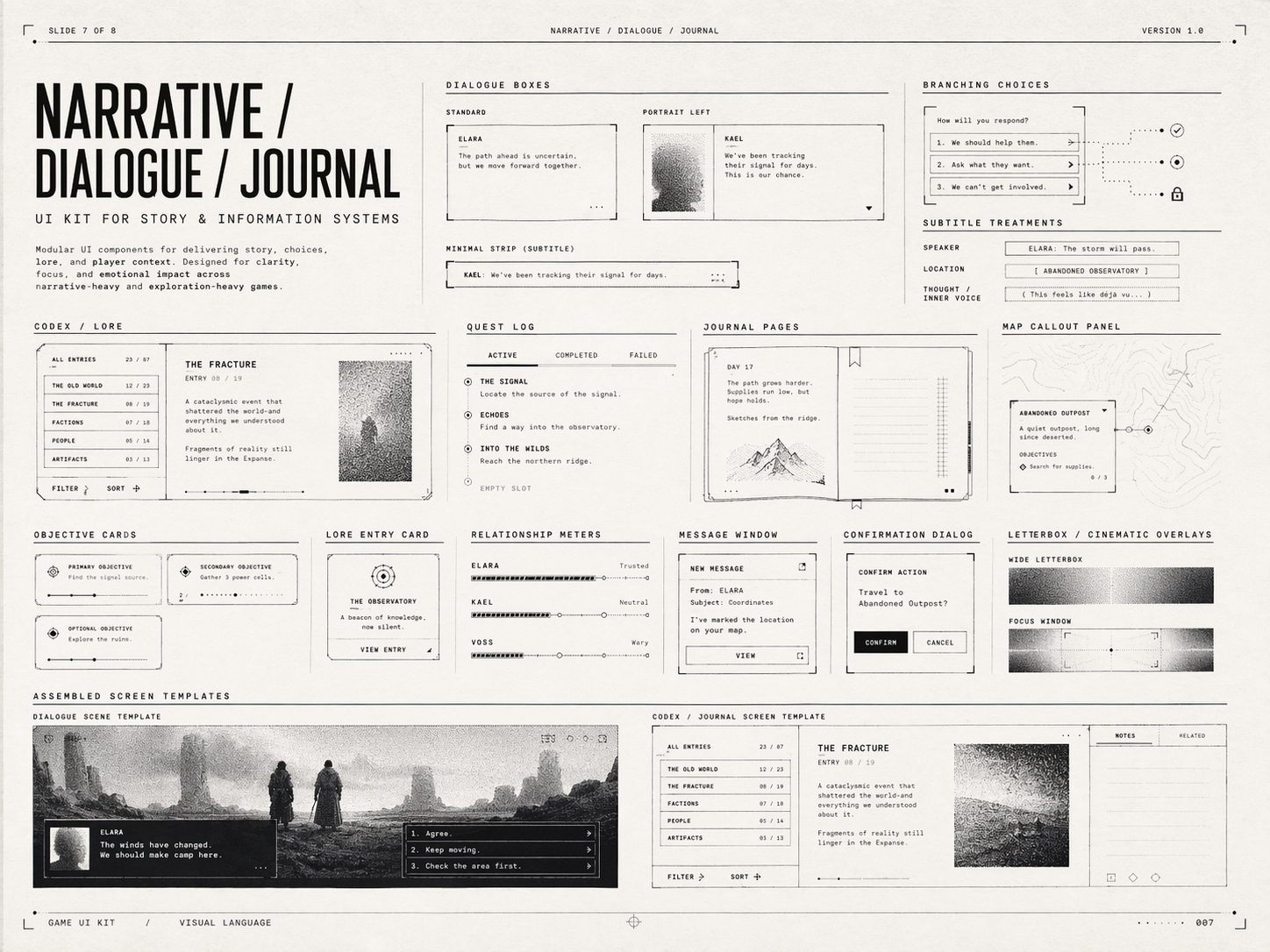

Create a multi-page (multiple images) UI kit for usage in a game, based on the uploaded moodboard images. 8 slides, including general UI elements, typography, color, art direction, specific UI elements for different types of games and purposes, templates (different UI elements put together cohesively).

And it gave me back this (right-click + open in new tab to see in full resolution):

And I was like, wow, that's really really really good, right? I can totally actually code this because it's so simple. Because I primarily use Claude and he can only generate HTML mockups, I sent him these images and he gave me this back:

It looks worse than the images, but the point of the HTML mockup is to force Claude to actually code it, and then to generate a design.md file containing detailed instructions on how to implement each UI element, for future instances to follow. That's exactly what I did, and now all that's left is the actual implementation, where I'll take my time and make sure each element is the best version it can be instead of the slightly fucked up versions Claude created.
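To make the design.md idea a bit more concrete, here's a minimal sketch of how a file like that could be rendered from a list of element specs. Everything in it is an assumption for illustration: the `UIElement` fields, the `render_design_md` helper, the element names and the colors are all made up, and it is not the file Claude actually generated.

```python
from dataclasses import dataclass, field


@dataclass
class UIElement:
    """One entry in a hypothetical design.md (all fields are illustrative)."""
    name: str
    purpose: str
    colors: dict[str, str] = field(default_factory=dict)  # role -> hex color
    notes: list[str] = field(default_factory=list)        # implementation hints


def render_design_md(title: str, elements: list[UIElement]) -> str:
    """Render the element specs as markdown, one section per element."""
    lines = [f"# {title}", ""]
    for el in elements:
        lines += [f"## {el.name}", "", el.purpose, ""]
        if el.colors:
            lines.append("Colors:")
            lines += [f"- {role}: `{hex_code}`" for role, hex_code in el.colors.items()]
            lines.append("")
        if el.notes:
            lines.append("Implementation notes:")
            lines += [f"- {note}" for note in el.notes]
            lines.append("")
    # Trim trailing blank lines and end the file with a single newline.
    return "\n".join(lines).rstrip() + "\n"


# Placeholder values, not taken from the actual UI kit.
EXAMPLE_ELEMENTS = [
    UIElement(
        name="Button",
        purpose="Primary clickable action.",
        colors={"background": "#202020", "text": "#f0f0f0"},
        notes=["Flat rectangle, no gradient.", "Brighten background on hover."],
    ),
    UIElement(name="Panel", purpose="Container for grouped elements."),
]

if __name__ == "__main__":
    print(render_design_md("UI Kit", EXAMPLE_ELEMENTS))
```

The only point of the sketch is that each element gets its own section with a purpose, its colors and concrete implementation notes, so that a fresh instance can pick the file up without having seen the mockups.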

And that's the process. I see no reason why this doesn't generalize, since you have control over the mood images as well as the prompt itself, which can be as detailed as you want. I have not tested it with other types of mood images, though, so I don't know how well it works for UI designs that have to be more gamey or less square/grid-like.

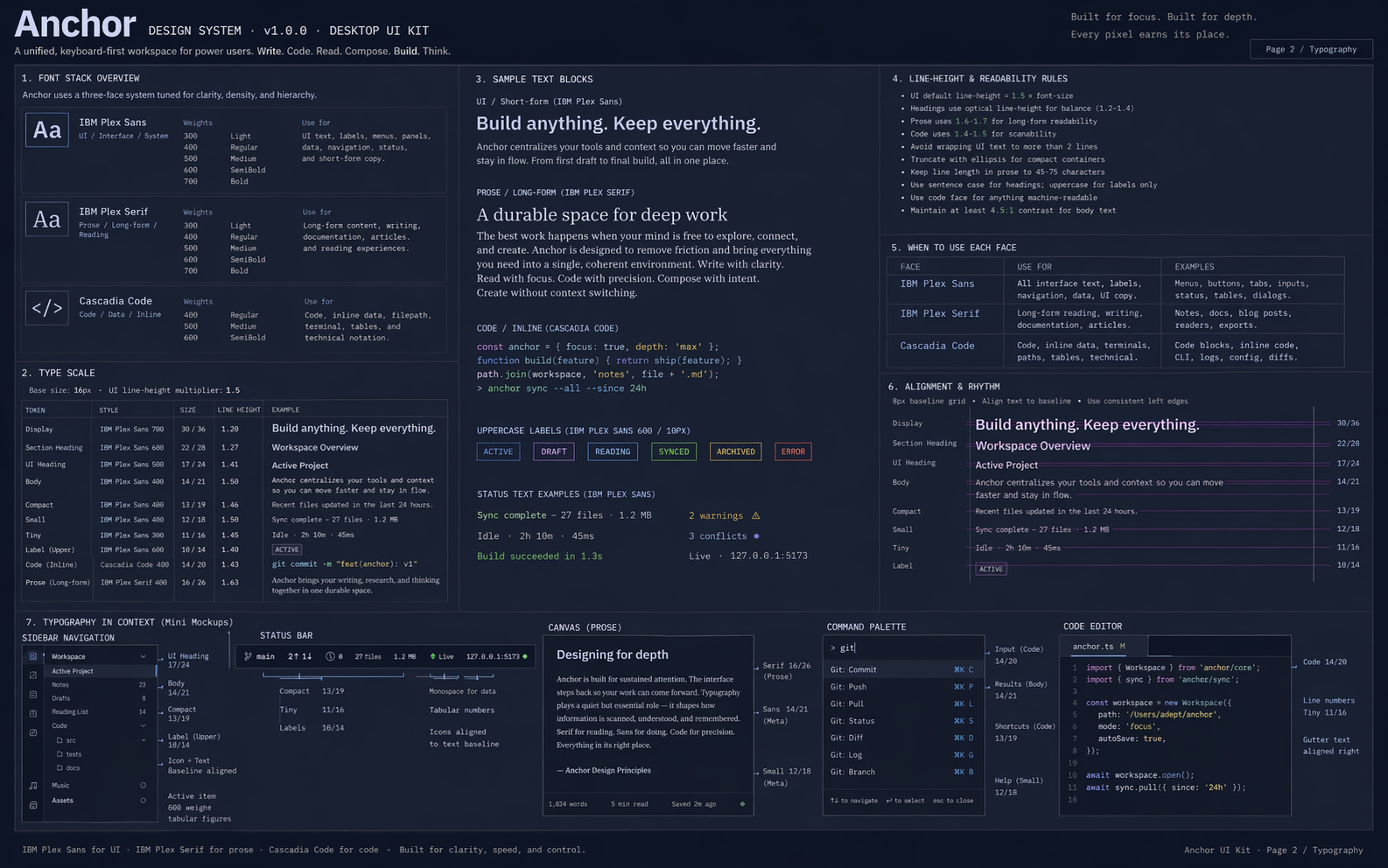

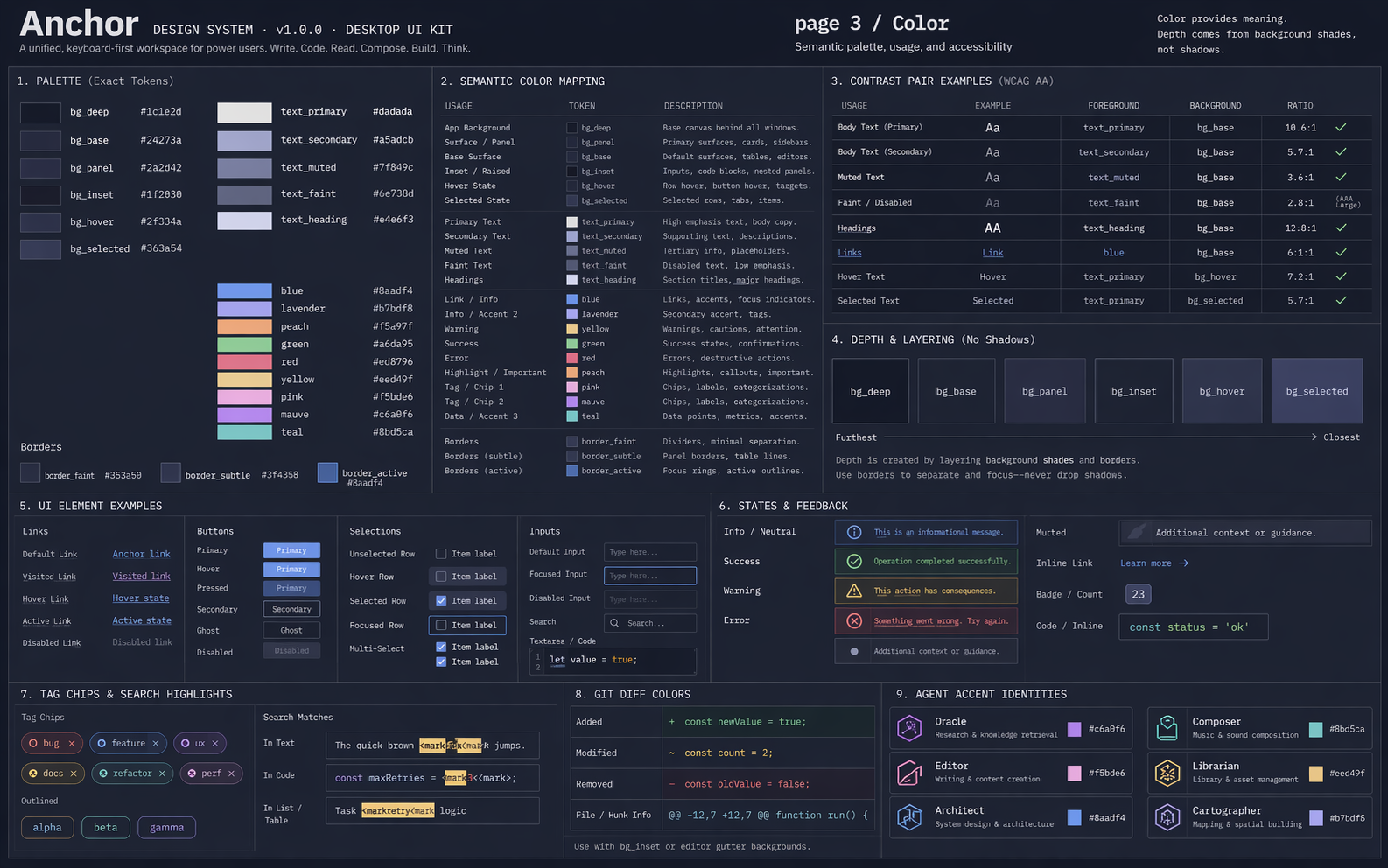

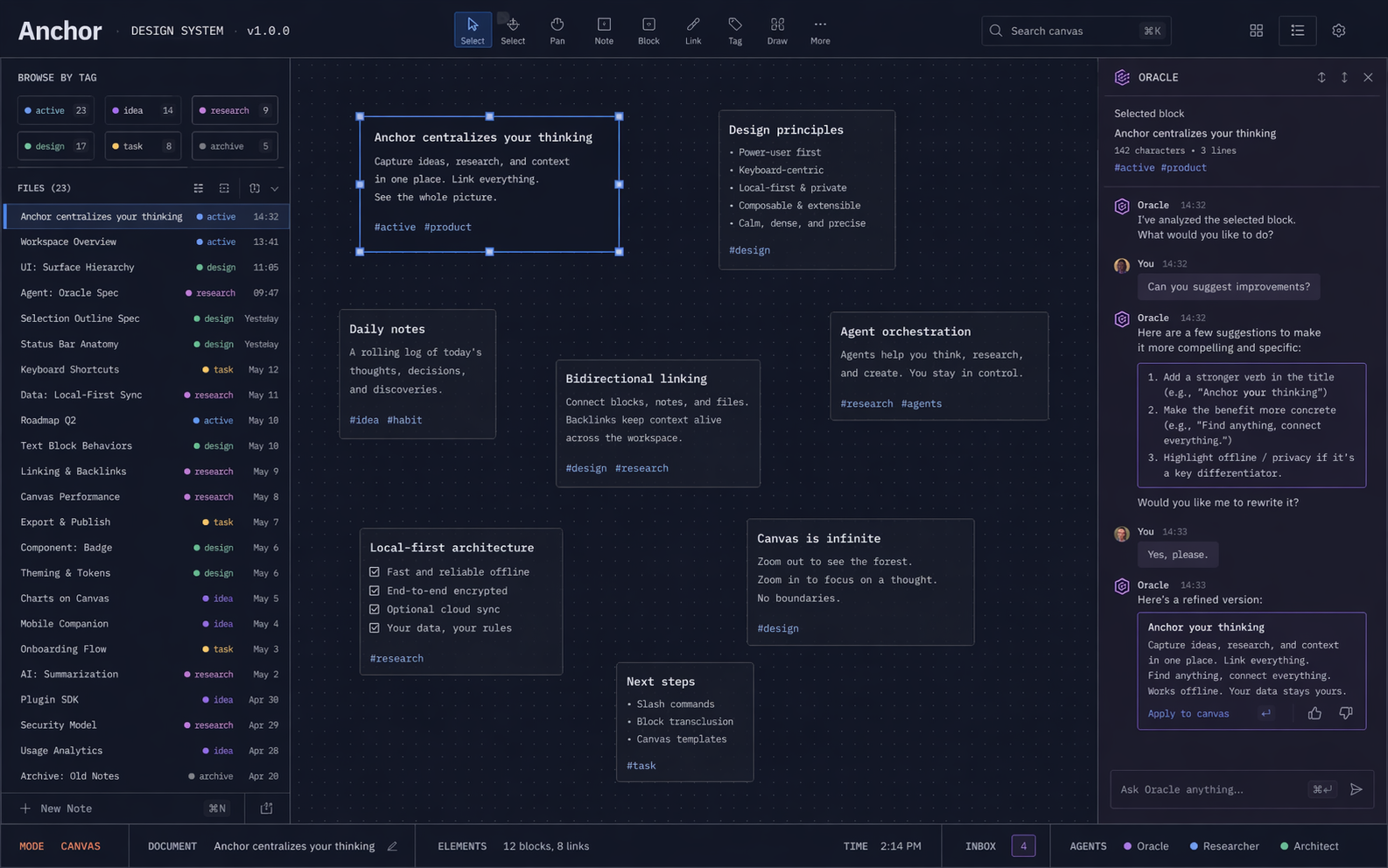

As an additional example, I mentioned I'm making an omega app for everything I do in the future. So I also tried to design for it. I didn't use this process exactly, but it was something close enough to it. Here's the initial Claude mockup:

Claude AnchorApp 1.html

Claude AnchorApp 2.html

I thought this was pretty good already. Interestingly, the mood used here was actually my own dev NeoVim environment. Claude read my NeoVim config, reasoned about what it roughly looked like, and then, with extra instructions for what the app should be, came up with that, which I thought was really cool. It really does feel like my NeoVim config, and since this is going to be a personal-use app, I already feel at home, so to speak.

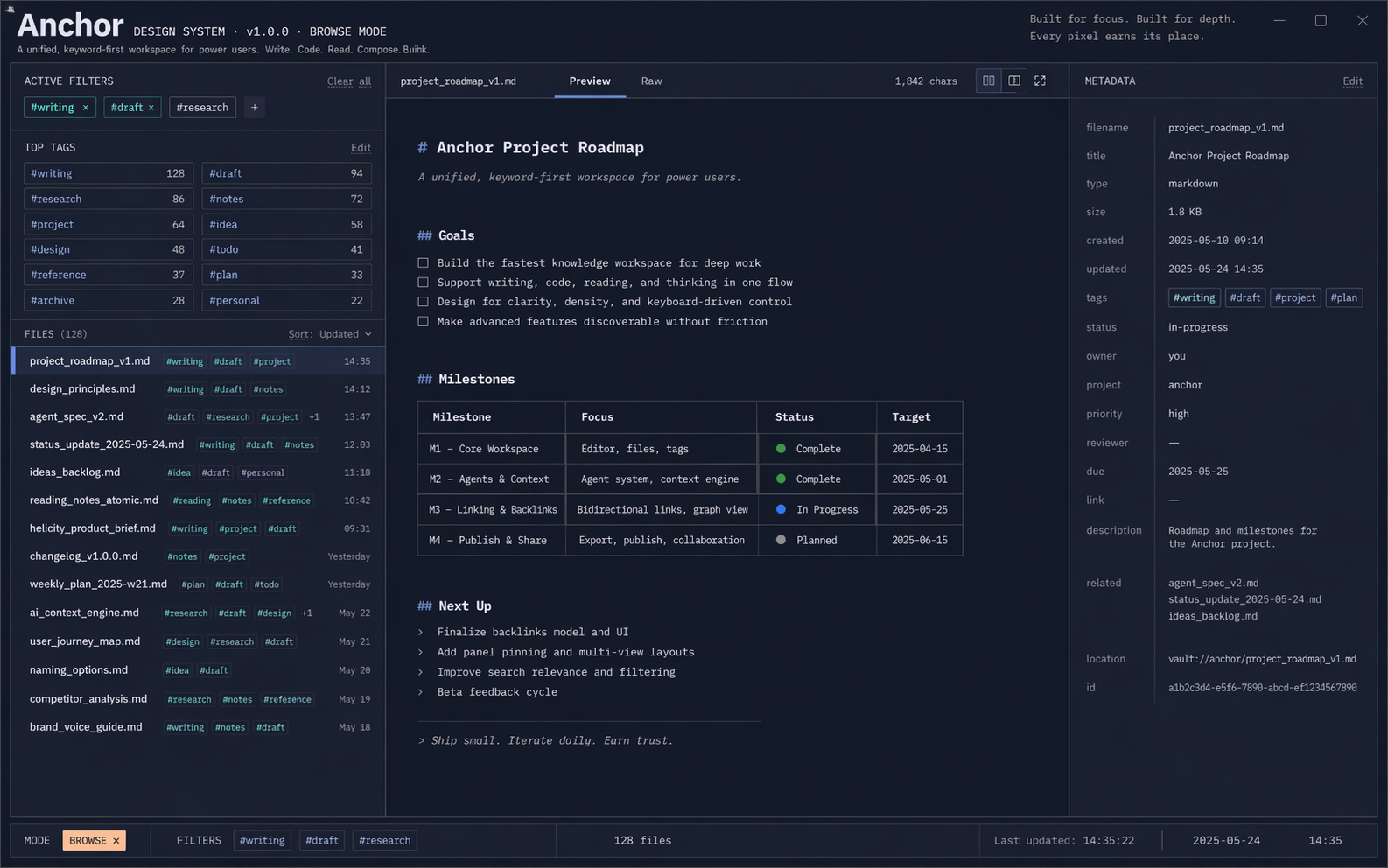

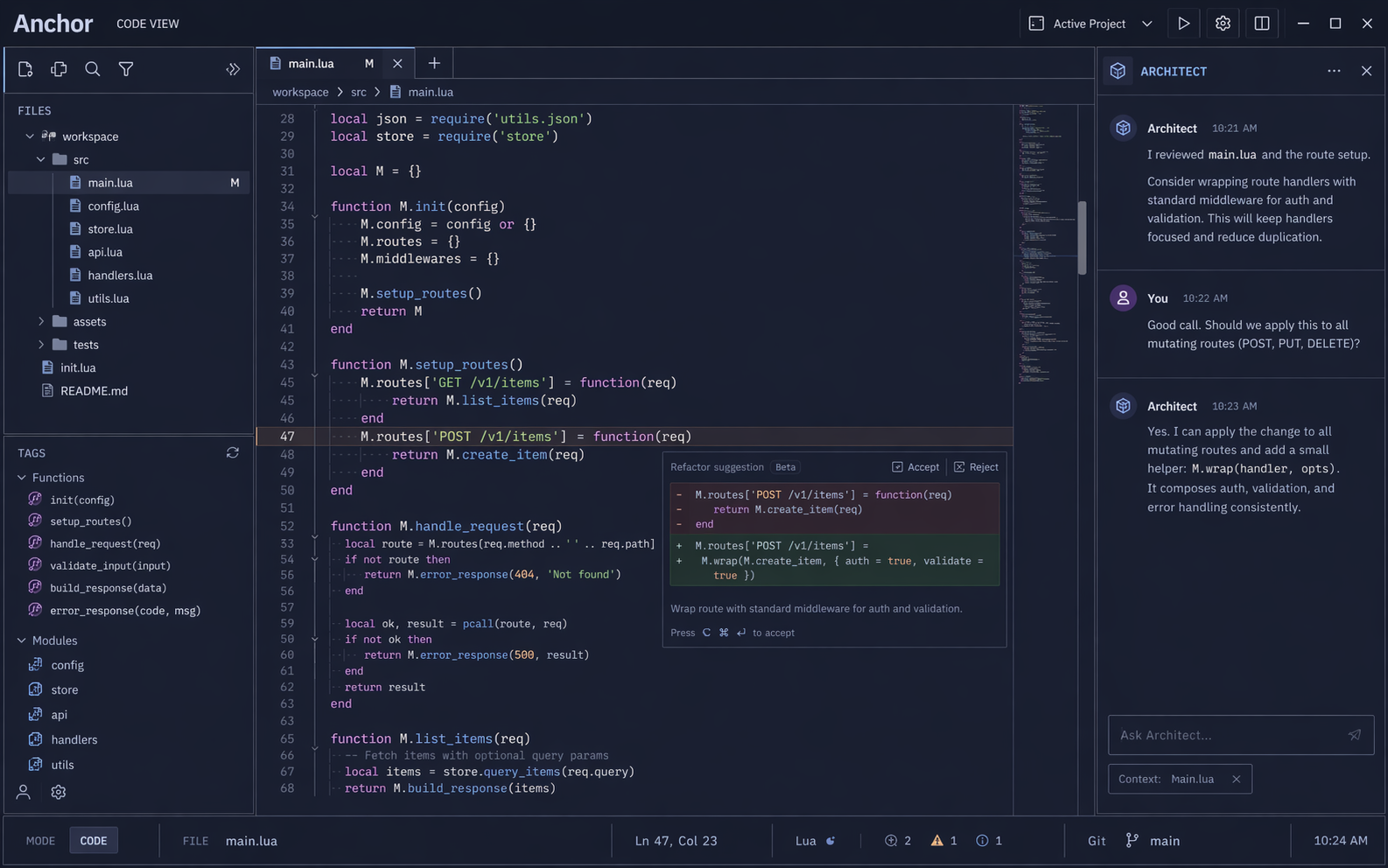

In any case, from these mockups I had Claude generate a design.md file, then fed it to ChatGPT with a prompt similar to the one before, and it created these:

Some pretty decent improvements, although some things I like less than the original. But then I fed these into Claude again and it made these:

Claude AnchorApp 3.html

Claude AnchorApp 4.html

Claude AnchorApp 5.html

Claude AnchorApp 6.html

There are elements of all these mockups that I like more or less, so the final solution will be a mix of everything, but they mostly get it right, I think.

Isn't this wonderful? These models can now do a lot UI-wise! They're a godsend for someone like me who knows what they like visually but can't actually do it themselves. I have yet to implement one of these UIs in actual code, but it's the next step.

It is remarkable that this can happen now. Every once in a while I'll find something that these models can do that really impresses me and I'm genuinely left speechless. This is literal magic, there's no other way to describe it. I think it's important to not lose sight of the fact that we now live in a magical world, and all the responsibilities that come with it.